Vision & Goals

Win-Win Community in the Agent Era

True AGI is still a decade away, and Agents are the essential path toward it. Seize the wave to stay relevant.

Through deep practice, knowledge sharing, and project collaboration, break down technical barriers and transform them into personal advantages and real value.

Empower everyone, win the Agent decade.

What We Offer

- Systematic learning paths and practice manuals

- One-on-one guidance from technical mentors and industry experts

- Paper notes, cutting-edge talks, and collaborative case studies

- Portfolio polishing, resume & interview coaching, referrals

Going Further

Deep Co-creation · Full-Chain Support for Papers, Projects, and Careers

If you want to get started with LLM Agents

- Learning paths and open-source repo recommendations

- Intro courses + hands-on projects

- A community of like-minded peers

If you want deeper collaboration on papers, projects, or your career

- Paper collaboration and jointly built experiments

- Collaboration on industry deployment projects

- Big tech job referrals and interview coaching

If you seek partnerships

- Co-building the community brand

- Joint promotion and events

- AI product and training guidance

Learning & Exploration

Carefully curated learning resources to help you quickly master core Agent technologies

Advanced Path

Community Projects / Papers

Idea2Story

An Agent framework that automatically turns research ideas into paper narratives at the level of top-tier conferences. Trained on tens of thousands of top-conference papers and their review data, it teaches AI to master "Scientific Storytelling".

Talks & Roundtables · Paper Reading

Revisiting On-Policy Distillation (OPD): Three Typical Failure Modes and How to Fix Them

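In recent LLM post-training, On-Policy Distillation has become one of the default options. Yet when analyzing training logs and experiment curves and comparing implementations of different OPD methods, researchers kept running into the same problem: sampled-token OPD, so natural in theory, is unstable in practice and can even push the model toward behavior that looks reasonable locally but, overall, …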

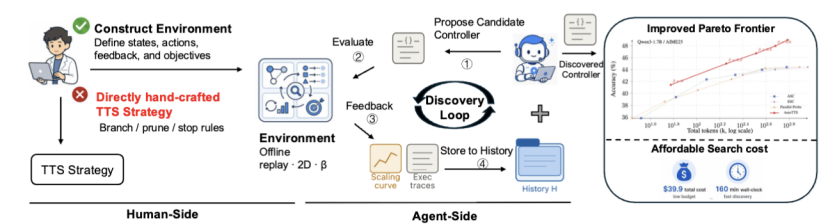

The Same Idea as Jiayi Weng (OpenAI)! How to Let AI Climb Leaderboards Fully Automatically: Possibly the Next Reinforcement Learning Paradigm

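Author: Chengsong Huang, PhD student at Washington University in St. Louis. Before deep learning, we did traditional machine learning: we studied patterns and wrote rule-based code to accomplish the tasks we wanted. Before LLMs, we used deep learning to extract features and designed networks to accomplish the tasks we wanted. After LLMs, we seem to be trying to solve every task with LLMs. But... have we really reached …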

OpenAI's Jiayi Weng: "Heuristic Learning", a New Reinforcement Learning Paradigm

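Author: Jiayi Weng. Original: https://trinkle23897.github.io/learning-beyond-gradients/#zh Continual Learning has long resisted solution, mainly because of catastrophic forgetting in neural networks: learn something new, and old abilities are easily washed out. So if we stop looking only at neural network weights, is there still …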

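In-Depth Dialogue! 2025 "Qingke" AI Carnival: Exploring Key Moments in AI Technology with 20+ Young Scientists

Built specifically for young scientists, this event hosts an in-depth dialogue on AI technology. More than 20 young scientists from academia and industry will look back on 2025 and ahead to 2026 together with you!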

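TRPO Reborn: Trust Region Policy Optimization in the Era of Large Models

In the reinforcement learning stage of large language models, especially RLHF, we pursue continual policy improvement. This talk takes an in-depth look at applying TRPO in the LLM era.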

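From π_0 to π_RL: A Reinforcement Learning Post-Training Framework for Flow-Matching VLAs

An in-depth look at π_RL, a reinforcement learning post-training framework for flow-matching VLAs, exploring the frontier of embodied intelligence.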

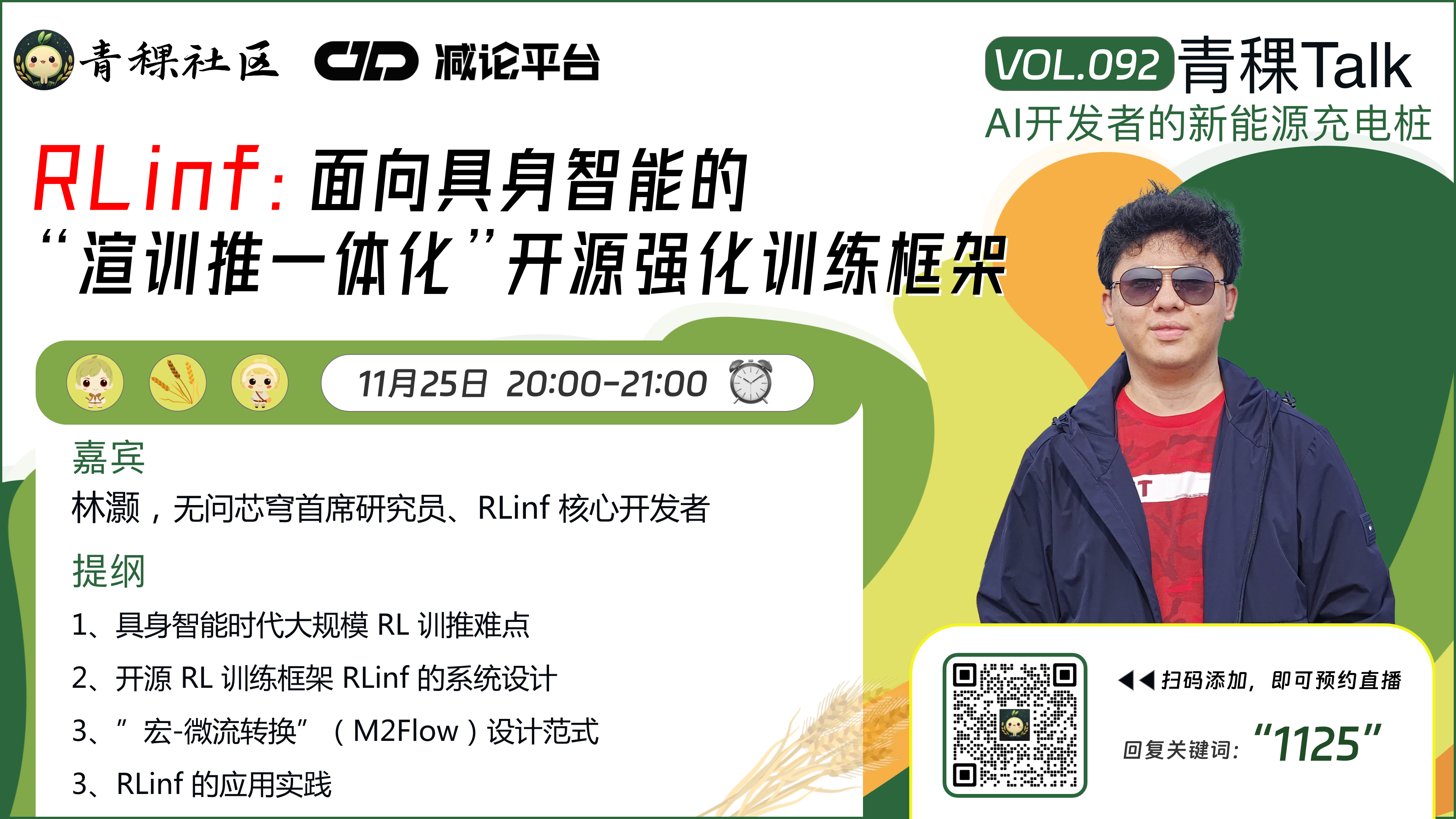

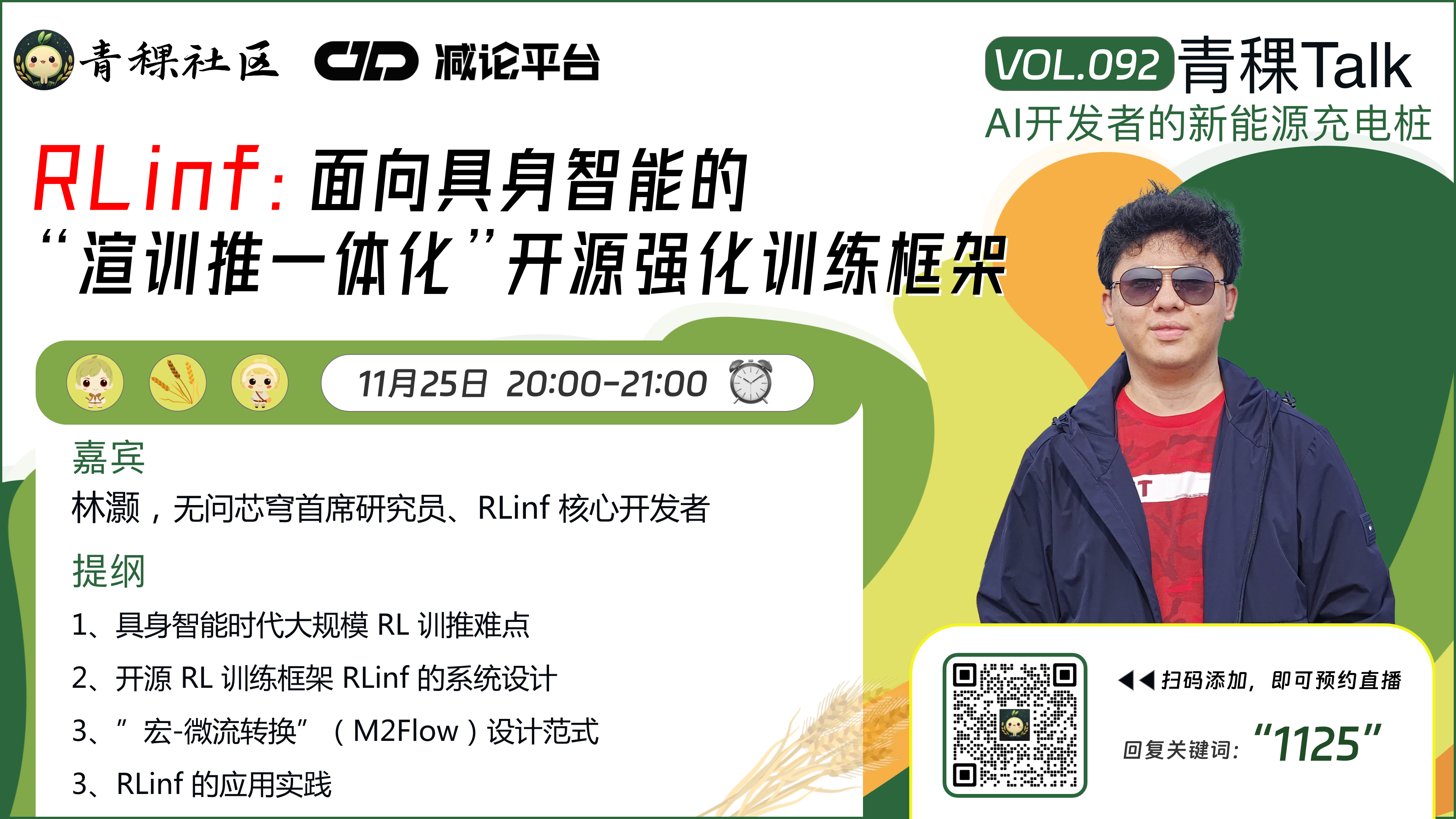

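RLinf: An Open-Source Reinforcement Learning Training Framework for Embodied Intelligence with "Unified Rendering, Training, and Inference"

RLinf, an open-source reinforcement learning training framework, unifies rendering, training, and inference to accelerate embodied intelligence R&D.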

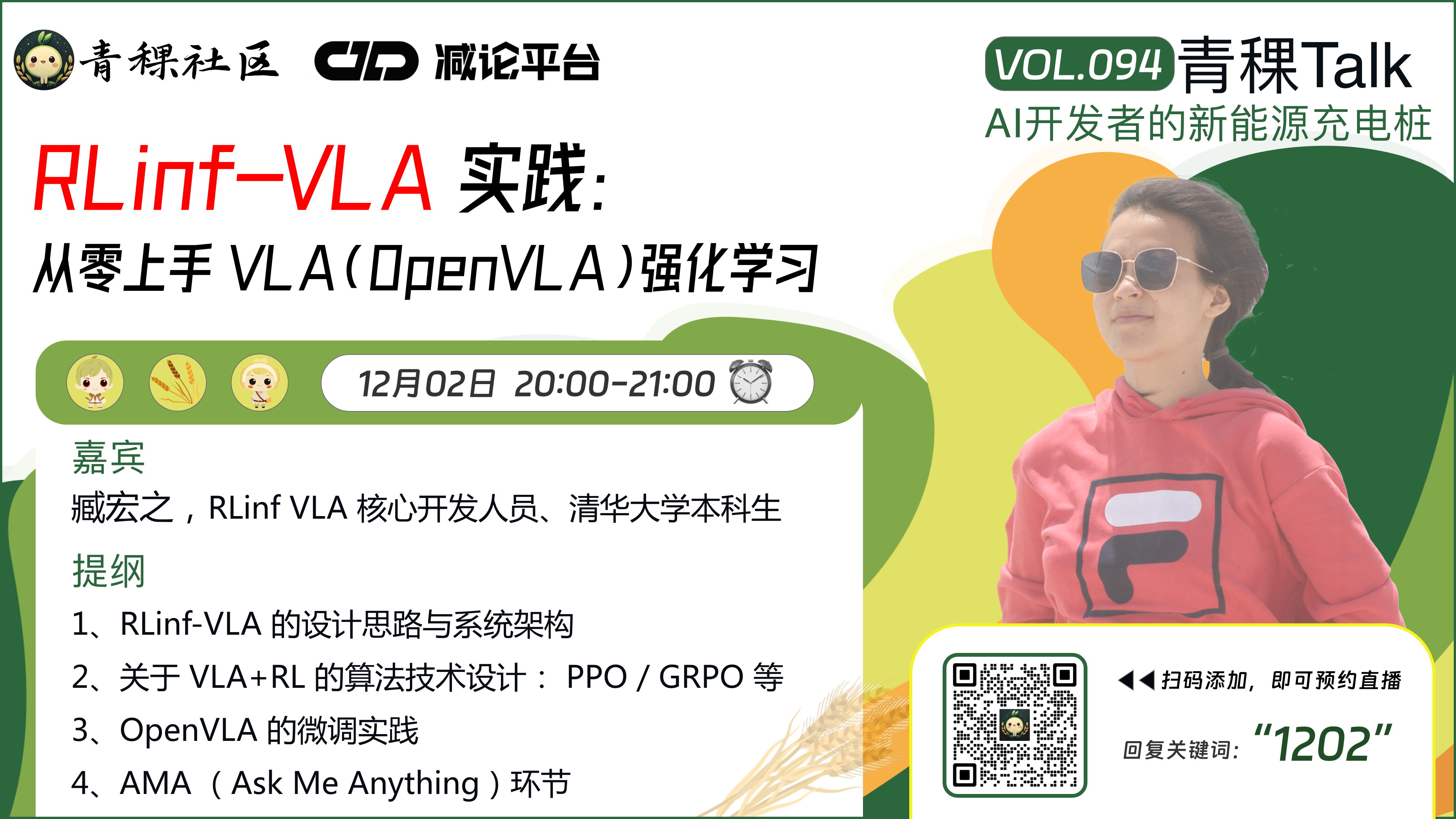

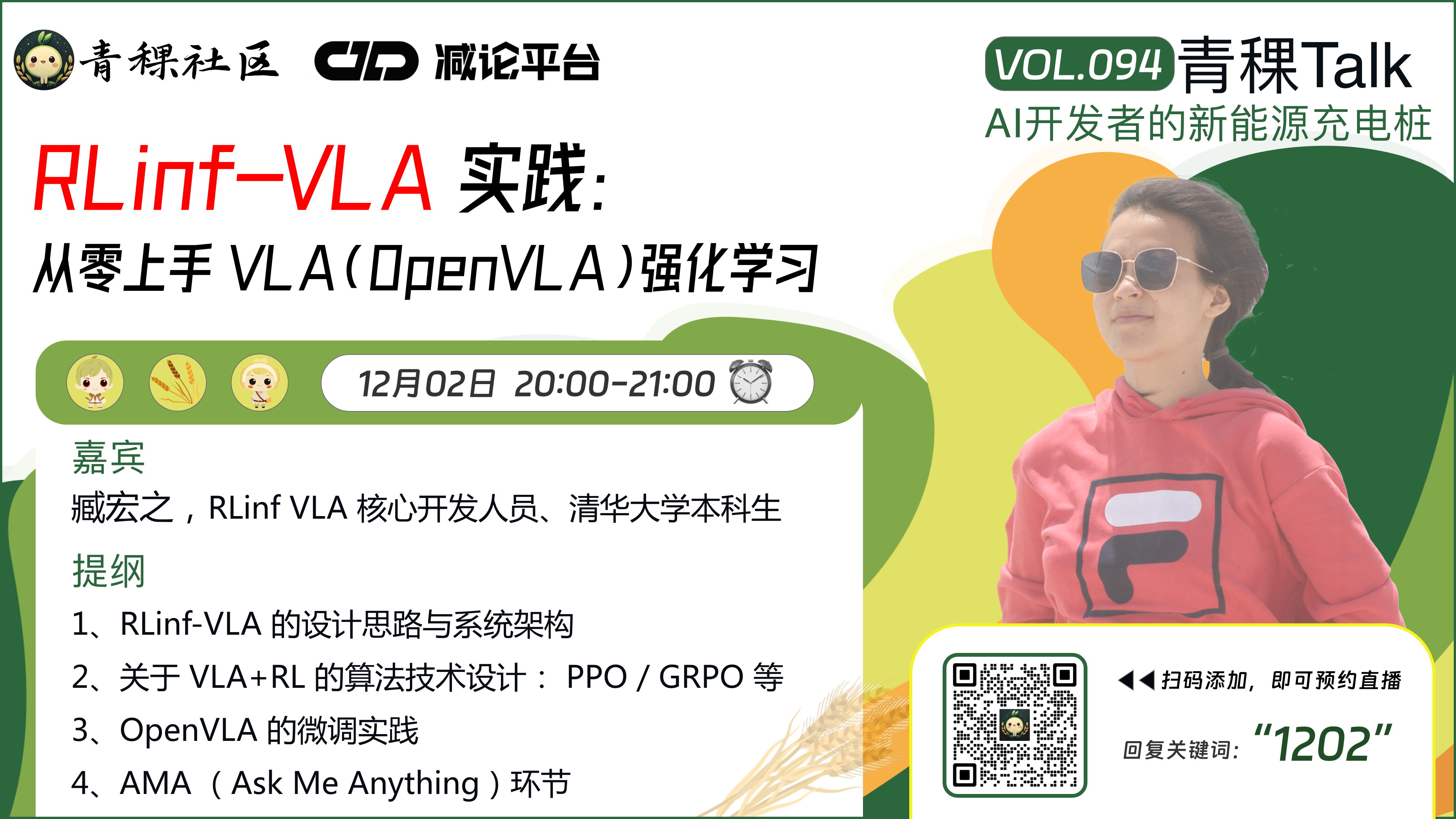

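RLinf-VLA in Practice: Getting Started with VLA (OpenVLA) Reinforcement Learning from Scratch

A step-by-step guide to OpenVLA reinforcement learning with the RLinf-VLA framework, as a first step into embodied intelligence development.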

Join Us

Foundation Mastery

LLM and multimodal fundamentals, stronger coding skills, and engineering standards

Agent Architecture

Planning, memory, tool calling, and evaluation, plus breakdowns of real business cases

Project Co-creation

Hands-on project teams, mentor Q&A, and code review

Career Leap

Portfolio polishing, interview workshops, mentor recommendations and referrals

Deep Practice + Mentor Q&A + Project Co-creation

Portfolio polishing, code review, weekly retrospectives, and referrals. Spots in each cohort are limited to keep interaction quality high.

Partnership & Consultation

WeChat Official Account: AgentAlpha

Interested in co-building the community, promotion partnerships, AI products, or training guidance? Or need support with papers, projects, or your career? Scan the QR code to follow our official account for more information.